Leo, ethical chatbot

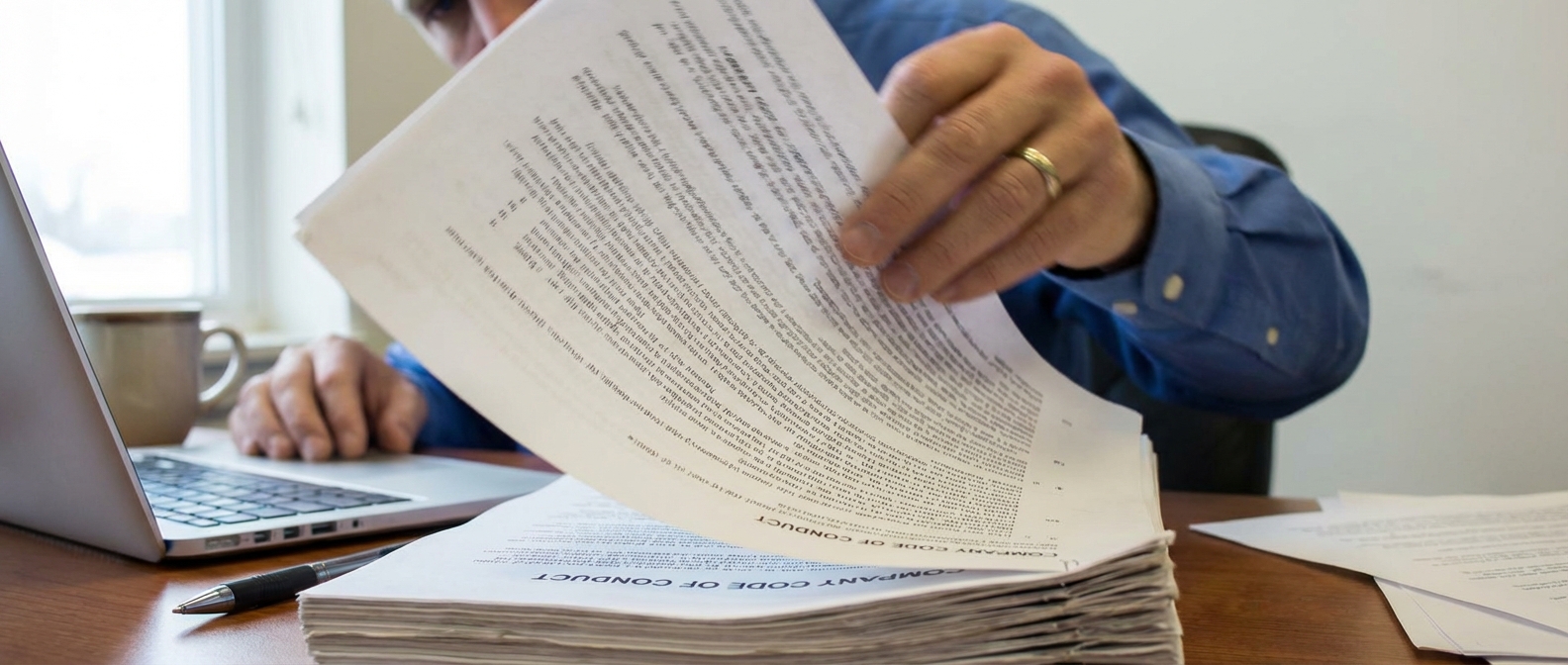

Employees face ethical dilemmas under time pressure, yet traditional Codes of Conduct are long, static, and difficult to navigate in the moment. When guidance is hard to access, uncertainty delays action and small issues can escalate into real risk.

Leo solves this by transforming a static ethics manual into a policy-grounded AI advisor. Built on a structured knowledge base and bounded by company rules, it provides instant, conversational guidance while reinforcing proper reporting channels. The result is a modern, scalable prototype that turns passive compliance documentation into an accessible, on-demand decision support tool.

The challenge

Organizations invest heavily in Codes of Conduct to define expectations and reduce legal and reputational risk. Yet research from LRN’s Ethics & Compliance Program Effectiveness Report shows that requiring employees to read and sign a policy does not guarantee ethical behavior in practice. Signed acknowledgment does not equal internalization.

In large companies with thousands of employees, Codes of Conduct often span 80 to 100 pages, covering conflicts of interest, harassment, data privacy, and reporting procedures. While comprehensive, these documents are dense and difficult to navigate under time pressure. Research from Ethisphere’s Global Ethics Survey reinforces that ethical culture depends on accessibility and clarity, not just documentation.

The core problem is not the absence of guidance. It is access at the moment of need. When employees face real dilemmas, searching through a long manual is unrealistic. The challenge was to transform static compliance documentation into something immediate, conversational, and decision-focused.

Designing a solution

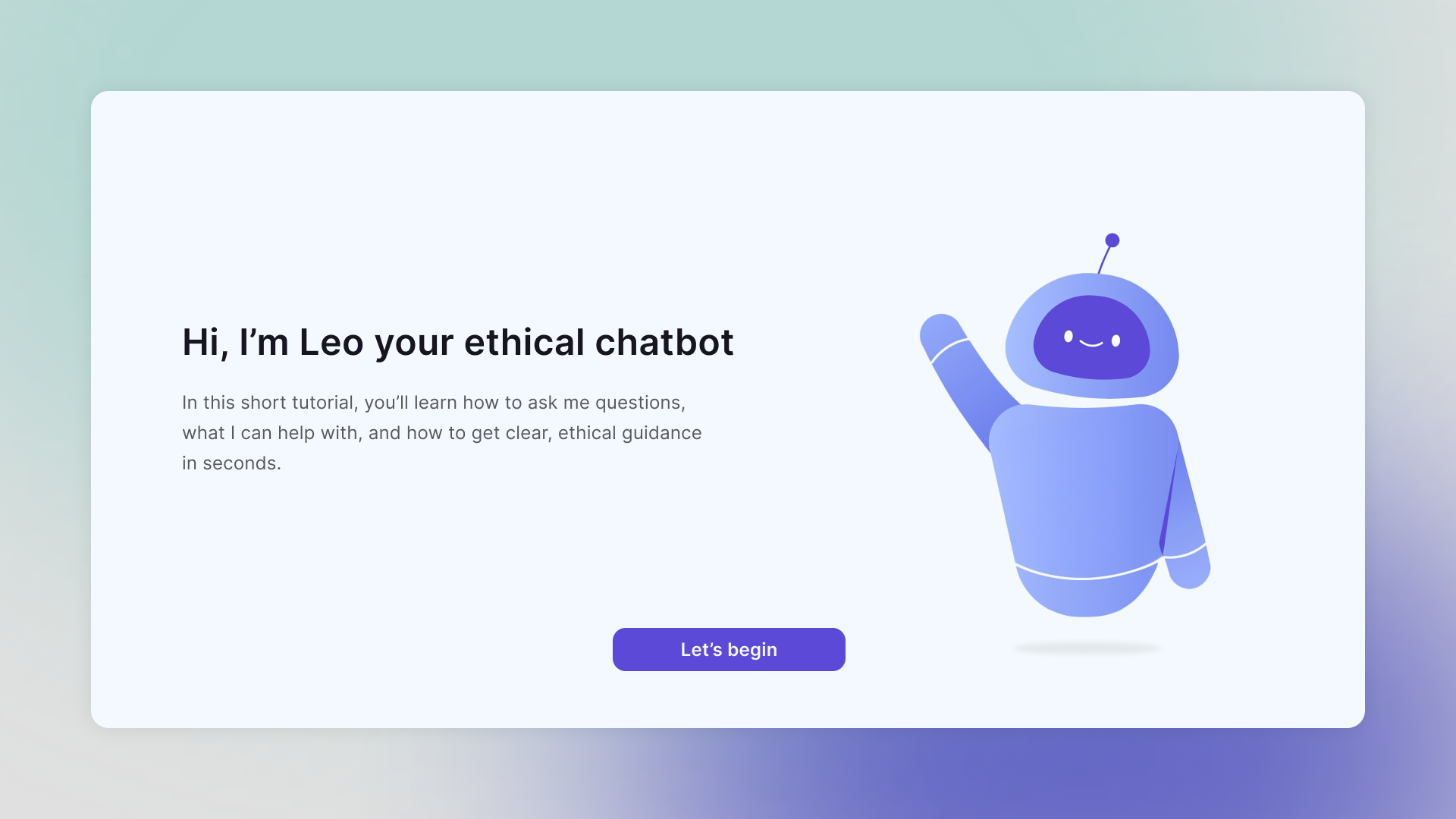

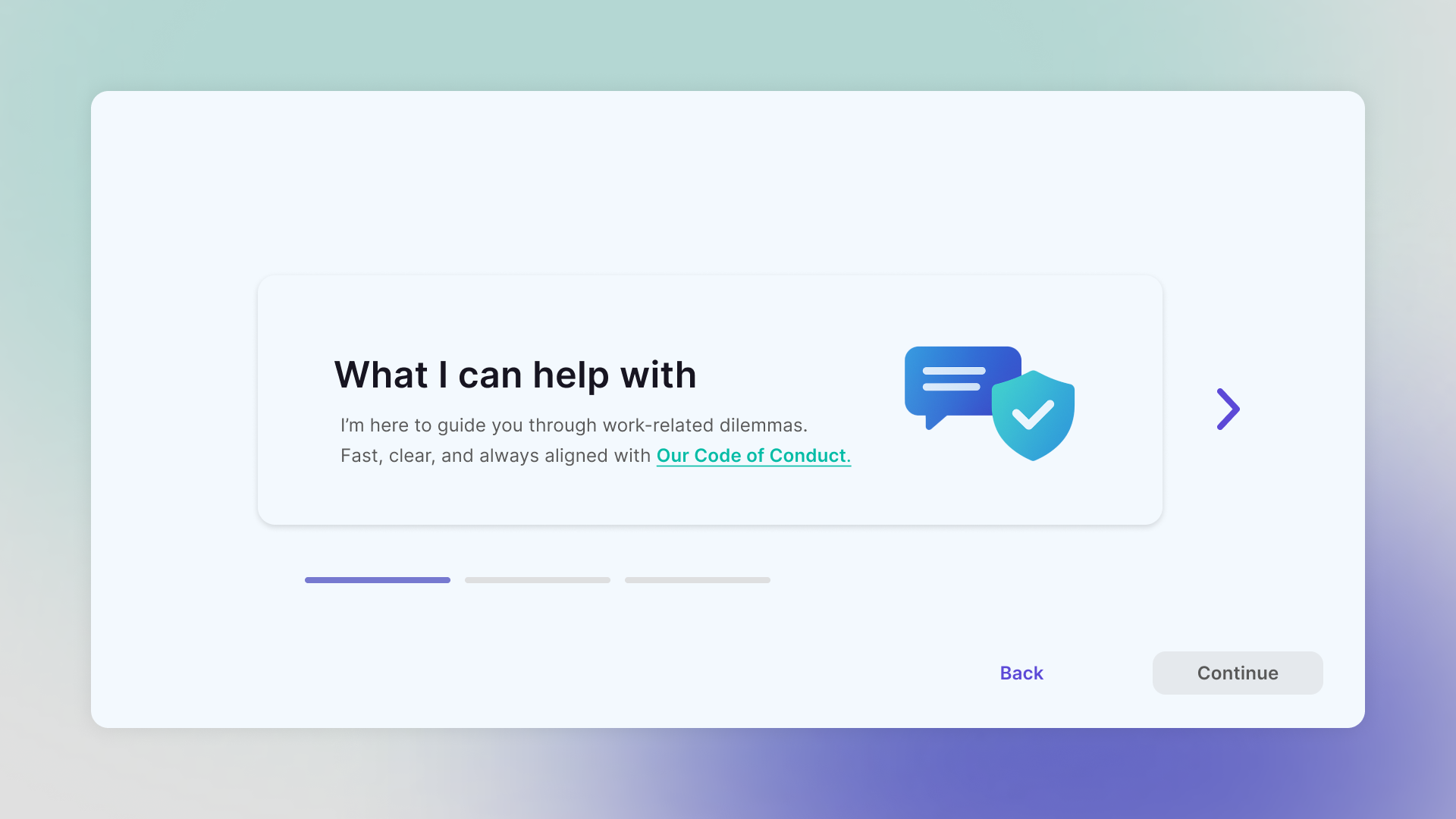

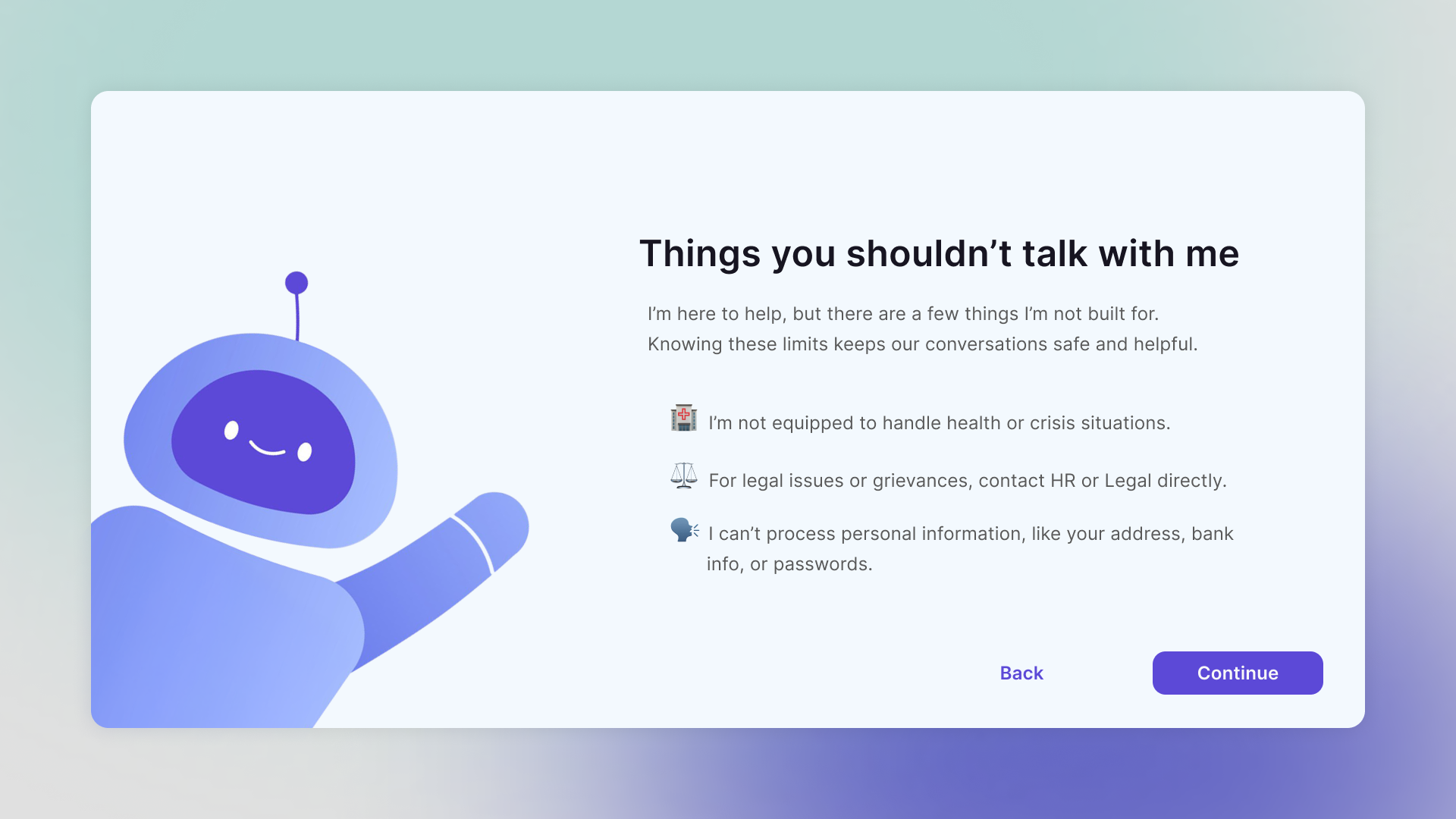

I approached this as a product design problem first: how do you make ethics guidance feel immediate, calm, and usable without turning it into a risky “open AI” chatbot? I took inspiration from Microsoft’s onboarding experience (clean layouts, soft gradients, glass-like transparency) to create a neutral but friendly interface. Leo’s minimal 3D robot avatar sets the tone: approachable, not playful, and serious enough for compliance.

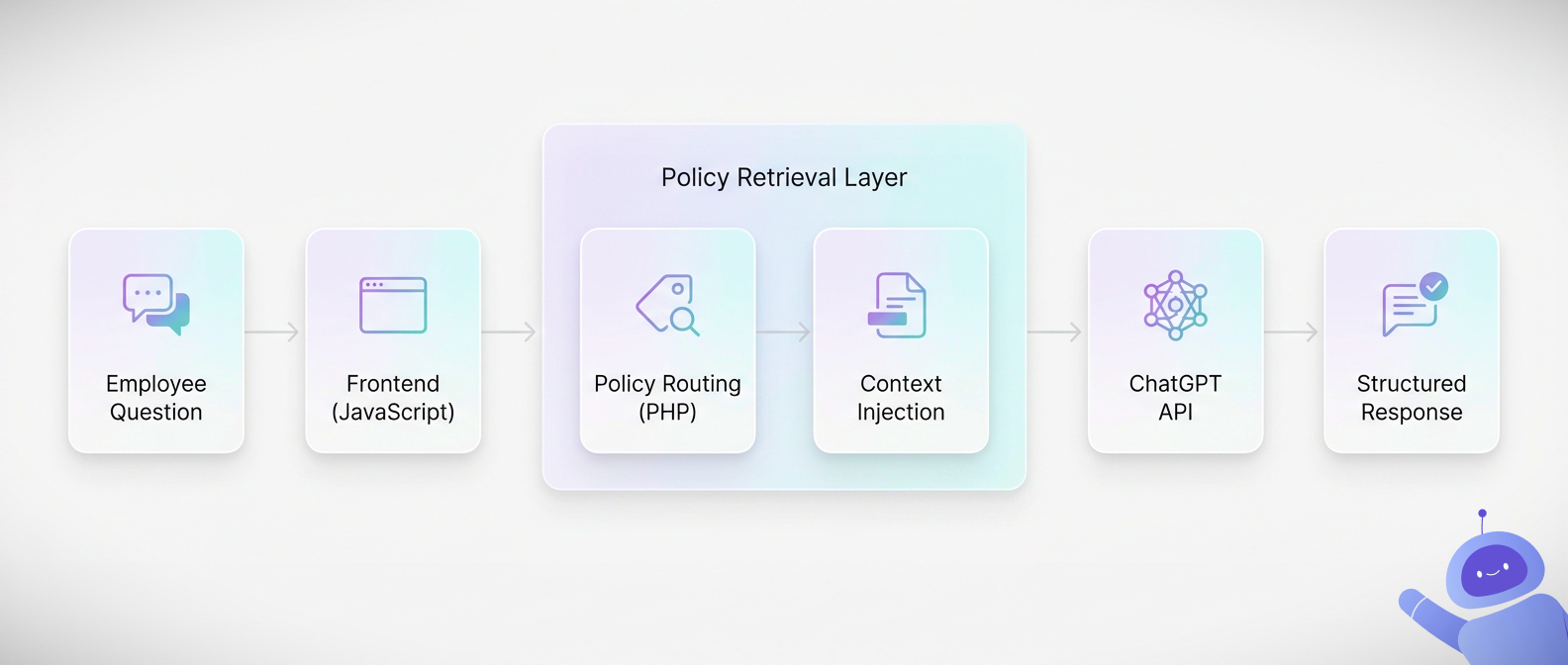

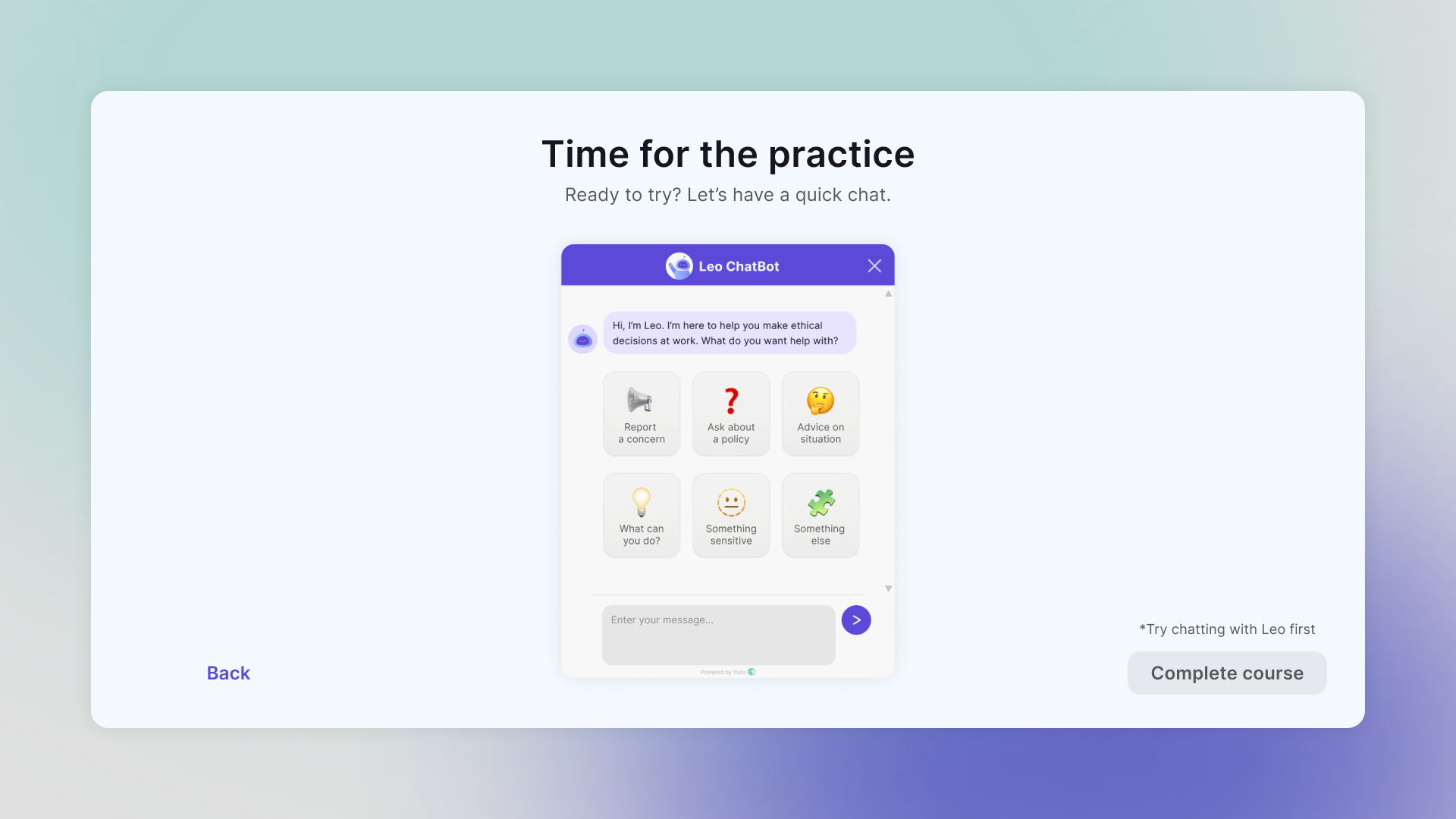

Next, I structured the content like a real company would. I wrote a full Code of Conduct for a fictional organization and broke it into 18 topic files (workplace conduct, reporting, gifts, data protection, conflicts, etc.). A simple PHP mapping layer then links 500+ keywords and phrases to the right topic, so the system can route a question to the correct policy area without searching the whole manual.
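To make the routing idea concrete, here is a minimal sketch of a keyword-to-topic lookup. It is written in Python for readability, not in the PHP used in the project, and the file names, the handful of phrases, and the scoring rule (longer phrases count more) are illustrative assumptions, not the actual 500+ entry map.

```python
import re

# Hypothetical sample of the phrase -> topic-file map (the real map is far larger).
KEYWORD_TO_TOPIC = {
    "gift": "gifts.md",
    "supplier dinner": "gifts.md",
    "harassment": "workplace_conduct.md",
    "customer data": "data_protection.md",
    "side business": "conflicts_of_interest.md",
    "report": "reporting.md",
}


def route_question(question):
    """Return the topic file whose phrases best match the question, or None."""
    text = question.lower()
    scores = {}
    for phrase, topic in KEYWORD_TO_TOPIC.items():
        # Leading word boundary only, so "report" also matches "reporting"
        # and "gift" matches "gifts", but not a word that merely contains it.
        if re.search(r"\b" + re.escape(phrase), text):
            # Assumption: multi-word phrases are more specific, so weight by word count.
            scores[topic] = scores.get(topic, 0) + len(phrase.split())
    if not scores:
        return None  # no policy area matched
    return max(scores, key=scores.get)


print(route_question("Can I accept gifts from a vendor?"))
```

Only the single best-scoring topic is returned, which mirrors the goal described above: one policy area per question instead of a search across the whole manual.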

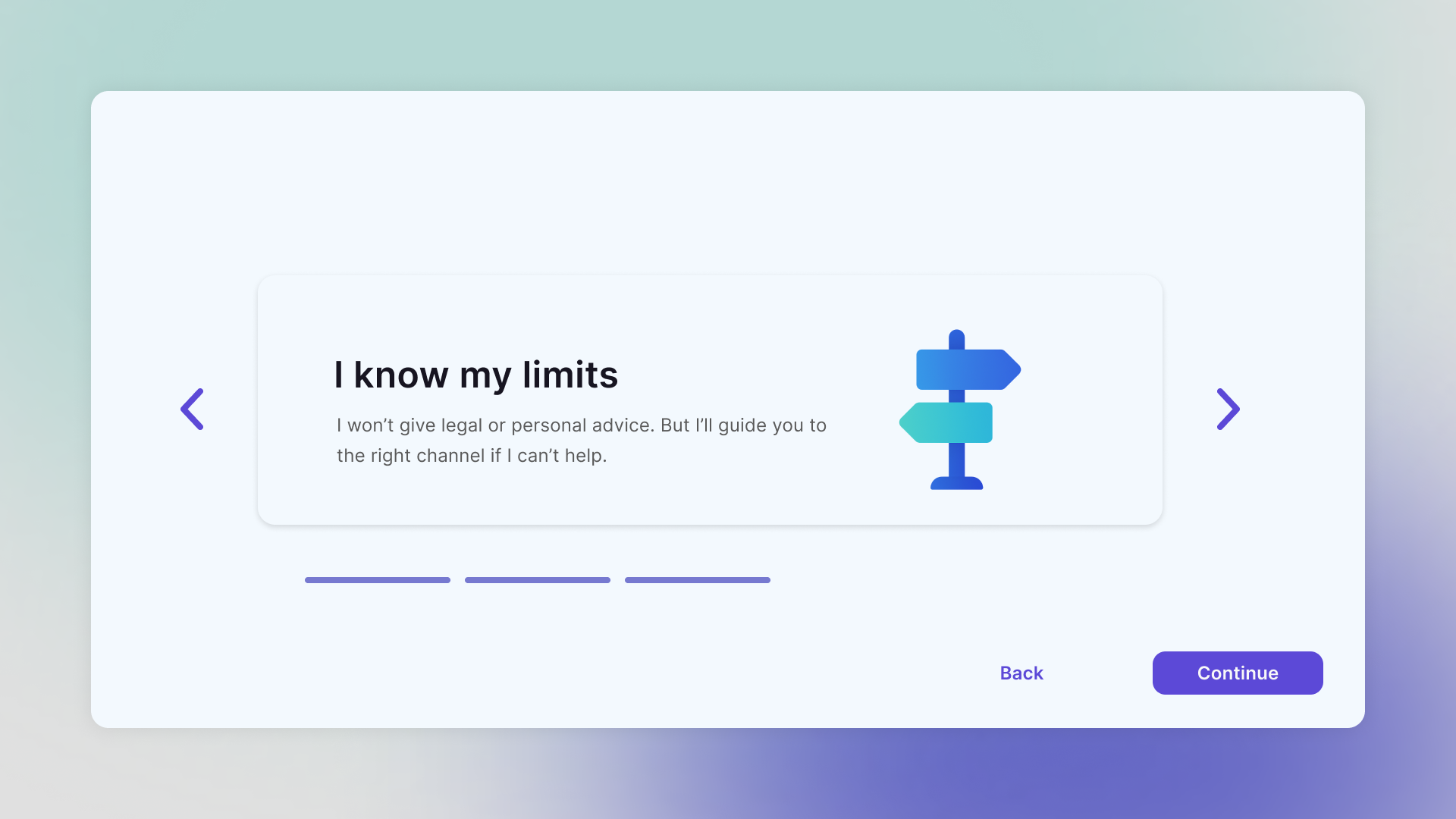

From there, I built the “safe answer” flow. The frontend collects the user’s question and sends it via AJAX to a PHP backend. The backend selects the best-matching policy file and passes only that excerpt into a fixed ChatGPT API prompt. The prompt forces a consistent response structure (acknowledge, clarify the ethical principle, suggest an aligned action, and redirect to HR or Legal when needed). If the question is outside scope (legal advice, personal decisions, judgment of intent, therapy-style support), the backend intercepts it before the API call and returns a controlled fallback.
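The flow above can be sketched roughly as follows. This is a Python illustration under stated assumptions, not the project's PHP code: the trigger phrases, the fallback wording, the prompt text, and the `call_model` parameter (a stand-in for whatever function actually calls the chat API) are all hypothetical. Treating an unmatched question (no excerpt) as a fallback is also my assumption.

```python
# Hypothetical triggers for the out-of-scope categories named above
# (legal advice, personal decisions, judgment of intent, therapy-style support).
OUT_OF_SCOPE_PHRASES = (
    "legal advice",
    "lawsuit",
    "should i quit",
    "is he lying",
    "therapy",
)

FALLBACK = "I can't help with that here. Please contact HR or Legal directly."

# Fixed prompt: only the excerpt slot changes between requests.
SYSTEM_PROMPT = (
    "You are Leo, an ethics advisor. Answer ONLY from the policy excerpt below. "
    "Structure every reply as: 1) acknowledge, 2) clarify the ethical principle, "
    "3) suggest an aligned action, 4) redirect to HR or Legal when needed.\n\n"
    "POLICY EXCERPT:\n{excerpt}"
)


def is_out_of_scope(question):
    """Simple substring check against the hypothetical trigger list."""
    text = question.lower()
    return any(phrase in text for phrase in OUT_OF_SCOPE_PHRASES)


def build_messages(question, excerpt):
    """Insert the single matched excerpt into the fixed prompt."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT.format(excerpt=excerpt)},
        {"role": "user", "content": question},
    ]


def answer(question, excerpt, call_model):
    """Intercept out-of-scope questions before any model call is made."""
    if excerpt is None or is_out_of_scope(question):
        return FALLBACK
    return call_model(build_messages(question, excerpt))


# Usage with a stub in place of the real API call:
print(answer("Can I get legal advice about my lease?", "any excerpt", lambda m: "model reply"))
```

Passing the model call in as a parameter keeps the interception logic testable without network access, and makes it easy to confirm that out-of-scope questions never reach the API.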

Finally, I designed the conversation behavior so it stays readable. Responses are capped to stay concise, split across multiple message bubbles when needed, and never exceed a set maximum number of bubbles. This keeps the interaction scannable, avoids walls of text, and mirrors the real goal: quick guidance at the moment of doubt, not a lecture.
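As a rough illustration of the bubble logic, the sketch below packs whole sentences into bubbles and enforces a hard cap. It is Python, not the project's own code, and the two limits (`MAX_BUBBLE_CHARS`, `MAX_BUBBLES`) are placeholder values I chose for the example.

```python
import re

MAX_BUBBLE_CHARS = 220  # hypothetical per-bubble length target
MAX_BUBBLES = 3         # hypothetical hard cap on bubbles per reply


def split_into_bubbles(reply, max_chars=MAX_BUBBLE_CHARS, max_bubbles=MAX_BUBBLES):
    """Pack whole sentences into bubbles; drop anything beyond the bubble cap."""
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", reply.strip())
    bubbles = []
    current = ""
    for sentence in sentences:
        if not sentence:
            continue
        candidate = (current + " " + sentence).strip()
        if current and len(candidate) > max_chars:
            # The current bubble is full: close it and start a new one.
            bubbles.append(current)
            current = sentence
        else:
            # Note: a single sentence longer than max_chars is kept whole.
            current = candidate
    if current:
        bubbles.append(current)
    return bubbles[:max_bubbles]


print(split_into_bubbles("One. Two. Three. Four.", max_chars=10, max_bubbles=2))
# → ['One. Two.', 'Three.']
```

Splitting on sentence boundaries instead of at a fixed character count keeps each bubble readable on its own, at the cost of an occasional bubble that runs slightly long.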

The result

The impact: From static policy to active guidance

Leo transforms a 100-page Code of Conduct into an on-demand advisory system. Instead of expecting employees to remember clauses or search through dense documentation, it provides structured, policy-grounded answers in seconds.

This shifts compliance from passive acknowledgment to active decision support. The goal is no longer “I signed the document,” but “I knew what to do when it mattered.”

The reach: Built for real workplaces

The interface is role-agnostic and accessible to employees at any level, regardless of technical background or seniority. Its conversational tone lowers the barrier to asking sensitive questions, while structured escalation paths reinforce formal reporting channels rather than replacing them.

Because the knowledge base is modular, it can be adapted to different industries, departments, or geographic regulations without redesigning the system from scratch.

The architecture: Responsible AI by design

Under the hood, Leo operates as a bounded advisory engine. Keyword mapping, thematic chunking, controlled insertion of a single policy excerpt into a fixed prompt, and fallback interception create a governance layer that limits hallucination and enforces policy alignment.

This is not an experimental AI toy. It is a structured compliance interface designed for scalability, safety, and clarity.
